Source: Blockonomi article “Micron (MU) Stock Soars 14% as Memory Shortage Drives Massive Rally” by Trader Edge, published May 8, 2026.

Micron Technology’s stock (MU) surged 14% in a single trading session after investment firm DA Davidson issued a staggering $1,000 price target. The rally is directly fueled by a severe, AI-driven global memory shortage, with High Bandwidth Memory (HBM) capacity sold out through 2026 and DRAM prices spiking 57% in April 2026 alone. This isn’t just a financial story; it’s a critical signal for the AI content creation industry, highlighting a fundamental hardware constraint that will impact the cost, scalability, and infrastructure of automated content workflows.

The AI Hardware Crunch: Why Memory is the New Gold

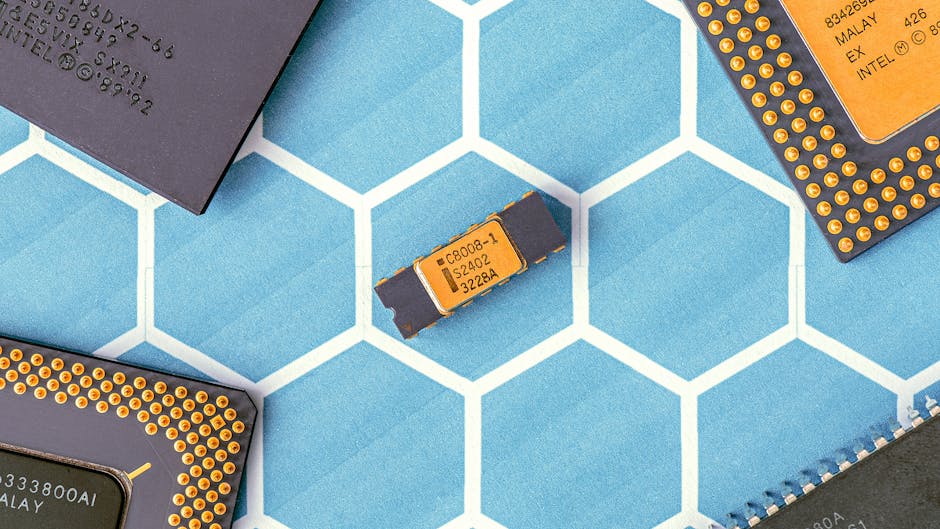

The core driver of Micron’s rally is a supply-demand imbalance of historic proportions in the semiconductor memory market. The explosion of generative AI model training and inference has created an insatiable appetite for two categories of memory: High Bandwidth Memory (HBM) and conventional DRAM. HBM, which consists of stacked DRAM dies packaged directly alongside a GPU or AI accelerator, is essential for feeding data-hungry AI processors at extreme speeds. Conventional DRAM is the high-speed working memory used across computing, from data center servers to your local AI workstation.

According to industry reports cited in the original article, HBM capacity is completely sold out through the end of 2026. This isn’t a short-term hiccup; the backlog already covers the rest of the year. Major AI hardware players like NVIDIA, AMD, and cloud providers (AWS, Google Cloud, Microsoft Azure) have locked in supply contracts years in advance, leaving little for new entrants or scaling projects. Concurrently, the spot market for DRAM saw prices skyrocket by 57% in April 2026, indicating frantic purchasing and scarcity.

For AI content creators, this translates to a direct cost increase in the foundational layer of their operation: compute. Running large language models (LLMs) like GPT-4, Claude 3, or open-source alternatives requires significant GPU memory (VRAM). More memory allows for larger batch sizes, longer context windows, and more complex reasoning, all of which improve output quality and efficiency. As the price of the underlying hardware (GPUs, AI servers) rises with memory costs, the price of cloud AI APIs (OpenAI, Anthropic) and the cost of leasing or building private AI infrastructure are likely to rise with it.

Impact on AI Content Creators: The Coming Cost Squeeze

The memory bottleneck will manifest for content professionals in several tangible ways over the next 12-24 months.

1. Rising API and Cloud Service Costs: The major AI service providers are not immune to hardware inflation. As their costs for procuring and operating AI-optimized servers (loaded with HBM and DRAM) increase, they are likely to pass these costs on to customers. Expect the per-token pricing for models like GPT-4-Turbo or Claude 3 Opus to see incremental hikes. For agencies and high-volume creators using millions of tokens per month, this will directly impact profit margins.

2. Stagnation in the “Context Window” Arms Race: One of the key competitive differentiators among AI models has been the size of the context window (e.g., 128K, 1M tokens). A larger context allows the AI to process entire long documents, maintain coherent multi-step instructions, and produce more nuanced content. However, scaling context windows is memory-intensive: the key-value cache a model keeps during inference grows in proportion to the number of tokens in context. The hardware shortage may slow the rollout of next-generation models with much larger contexts, as the physical memory to run them efficiently becomes prohibitively expensive or simply unavailable.

3. Barriers to Entry for Local AI: Many advanced creators have moved to running open-source models (like Llama 3, Mixtral) locally on their own hardware for cost control, privacy, and customization. The dream of a powerful, affordable local AI workstation is hitting a wall. High-end consumer GPUs like the NVIDIA RTX 4090 (24GB VRAM) are already premium items. If memory chip prices drive up the cost of future generations of these cards, the ROI for a local setup diminishes, pushing more users back to the cloud and its variable costs.

4. Slowdown in AI Tool Innovation: Startup AI tool developers building the next Jasper.ai or Copy.ai rely on affordable API access or their own leased infrastructure to experiment and scale. A hardware-driven cost increase acts as a tax on innovation, potentially slowing the pace of new feature development and forcing a focus on efficiency over experimentation.
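The memory pressure described in point 2 can be made concrete with a rough estimate. The sketch below is illustrative only: it uses the standard approximation for the key-value cache of a decoder-only transformer, and the model shape (32 layers, 8 key/value heads, head dimension 128, 16-bit values) is an assumption chosen to resemble an 8B-parameter open-source model, not a figure from the source article. The function names are hypothetical.

```python
def kv_cache_bytes(seq_len, n_layers, n_kv_heads, head_dim,
                   bytes_per_value=2, batch_size=1):
    """Approximate key/value cache size for a decoder-only transformer."""
    # Factor of 2: each layer stores one key tensor and one value tensor.
    per_token = 2 * n_layers * n_kv_heads * head_dim * bytes_per_value
    return per_token * seq_len * batch_size


# Assumed model shape (hypothetical, roughly an 8B model with
# grouped-query attention and 16-bit cache values).
MODEL = dict(n_layers=32, n_kv_heads=8, head_dim=128)


def report(context_lengths):
    """Return (context length, cache size in GiB) pairs."""
    return [(n, kv_cache_bytes(n, **MODEL) / 2**30) for n in context_lengths]


if __name__ == "__main__":
    for n, gib in report([8_192, 131_072, 1_048_576]):
        print(f"{n:>9,} tokens -> {gib:6.1f} GiB of KV cache")
```

Under these assumptions the cache alone needs about 1 GiB at 8K tokens, 16 GiB at 128K tokens, and 128 GiB at 1M tokens, per concurrent request and before counting the model weights. That linear growth is why very long contexts depend so heavily on memory supply.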

Practical Strategies for AI Content Creators to Mitigate Risk

Proactive content strategists can implement several tactics to hedge against rising AI compute costs and maintain sustainable workflows.

1. Audit and Optimize Token Usage: This is low-hanging fruit. Use tools within your AI platform (like OpenAI’s usage dashboard) or third-party analytics to identify waste. Are you using overly verbose system prompts? Can you achieve the same result with a smaller, more efficient model (e.g., GPT-4o-mini instead of GPT-4-Turbo for first drafts)? Implement strict token budgeting for routine tasks.

2. Embrace Hybrid and Cached Workflows: Don’t use a sledgehammer for every nail. Develop a tiered content strategy. Use high-cost, high-intelligence models (Claude 3 Opus, GPT-4) only for critical tasks like strategic ideation, complex editing, and compliance checking. Use cheaper, faster models for bulk drafting and simple rewrites. Furthermore, implement caching systems. If you generate a “10 best tips for X” article that performs well, store that core structure and reuse it with AI-assisted variations, rather than generating entirely net-new content from scratch every time.

3. Invest in Prompt Engineering & Fine-Tuning: The most cost-effective token is the one you don’t have to generate. Superior prompt engineering (crafting clear, concise, and structured instructions) reduces hallucination, cuts down on revision cycles, and gets you to final-quality output faster. For specialized use cases (e.g., generating product descriptions in a specific brand voice), investigate fine-tuning a smaller open-source model. The upfront cost of fine-tuning can be offset over time by lower per-inference costs, and fine-tuned models often need shorter prompts, which saves memory.

4. Leverage Content Automation Platforms with Built-in Efficiency: Platforms like EasyAuthor.ai are designed specifically for efficient, scalable AI content production. They automate the entire workflow, from keyword research and SEO briefing to multi-model generation, internal linking, and WordPress publishing, within a controlled environment. This reduces the “friction cost” of manual API calls, prompt copying, and format switching. A unified platform can implement efficiency protocols (model selection, prompt templates, content recycling) at a systemic level, helping keep your entire operation optimized for cost-per-piece.

5. Lock in API Rates and Explore Alternative Providers: If you have predictable, high-volume usage, contact your AI API provider about enterprise or committed-use contracts that may offer price protection. Simultaneously, diversify your model portfolio. Explore providers like Google’s Gemini, Anthropic’s Claude, or open-source model endpoints (via Together AI, Replicate) to compare pricing and performance for your specific needs. Avoid vendor lock-in.
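The tiered and cached workflow from points 1 and 2 could be sketched roughly as follows. Everything here is an assumption for illustration: the tier names, the per-1K-token prices, and the task categories are placeholders rather than real provider rates, and the exact-match cache is a simplification of the template reuse described above.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Tier:
    name: str
    usd_per_1k_input: float
    usd_per_1k_output: float


# Placeholder prices for illustration only; not real provider rates.
CHEAP = Tier("small-model", 0.0005, 0.0015)
PREMIUM = Tier("large-model", 0.01, 0.03)

# Hypothetical task categories that justify the expensive tier.
PREMIUM_TASKS = {"ideation", "complex_edit", "compliance"}


def pick_tier(task_type):
    """Route critical tasks to the premium tier, everything else to cheap."""
    return PREMIUM if task_type in PREMIUM_TASKS else CHEAP


def estimate_cost(tier, input_tokens, output_tokens):
    """Estimated spend in USD for one request at the given tier."""
    return ((input_tokens / 1000) * tier.usd_per_1k_input
            + (output_tokens / 1000) * tier.usd_per_1k_output)


@dataclass
class Planner:
    cache: dict = field(default_factory=dict)
    spent_usd: float = 0.0

    def run(self, task_type, prompt, input_tokens, output_tokens):
        key = (task_type, prompt)
        if key in self.cache:
            # Cache hit: reuse the stored result, no new spend.
            return self.cache[key]
        tier = pick_tier(task_type)
        cost = estimate_cost(tier, input_tokens, output_tokens)
        self.spent_usd += cost
        result = {"tier": tier.name, "cost_usd": cost}
        self.cache[key] = result
        return result


if __name__ == "__main__":
    planner = Planner()
    planner.run("bulk_draft", "10 best tips for X", 1000, 2000)
    planner.run("bulk_draft", "10 best tips for X", 1000, 2000)  # cached
    planner.run("compliance", "Check claims in draft", 1000, 2000)
    print(f"Estimated spend: ${planner.spent_usd:.4f}")
```

In a real pipeline the `run` method would call the chosen provider instead of returning a stub dictionary, and token counts would come from the provider's usage data. The point of the sketch is that routing and caching decisions are cheap to encode once and then apply to every request.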

Conclusion: Efficiency as the New Competitive Advantage

The Micron stock surge is a canary in the coal mine for the AI content industry. The era of treating AI compute as an infinitely cheap and scalable resource is ending. The next phase will be defined by strategic efficiency, smart workflow design, and a keen understanding of the underlying hardware economics. For professional content creators and agencies, the winners will be those who optimize their token consumption, master hybrid model strategies, and leverage integrated automation platforms to do more with less. The memory shortage is not just a chipmaker’s boom; it’s a mandate for the entire AI content ecosystem to mature, prioritize sustainability, and build moats around operational intelligence. Start adapting your workflows today, because the cost of AI-powered creativity is on the rise.